Teen Crisis: The Twenty-Six Words That Shielded Social Media

Part 2, The Forces: A 1996 law built the wall. The platforms built an addiction machine behind it. A 2026 jury cracked it.

In Part Two of our series on social media's impact on teen mental health, we turn to the forces that got us here. How the 1996 law shielded the industry for thirty years. How the platforms, behind that shield, engineered what Facebook’s founding president called a vulnerability in human psychology — and aimed it at children. How former employees broke ranks to expose what the company knew. How parents' lawyers, after years of losing, finally found the theory that worked. And how change is now stirring around the world, except in Washington.

Missed Part One? It unpacks the epidemic of depression, anxiety, and, tragically, suicide among a generation of teenagers, and social media’s role in it. Part Three will explore solutions. All series (for reading or listening) are at solvingfor.io.

In October 1994, an anonymous user logged onto a bulletin board called Money Talk and accused a Long Island brokerage firm of running fraudulent IPOs. The firm’s president, the post said, had committed a twenty-two-million-dollar criminal fraud.

The bulletin board was hosted by Prodigy — an early online service similar to AOL, a dial-up walled garden with about two million subscribers. The firm was Stratton Oakmont — the boiler room Jordan Belfort would later memorialize in The Wolf of Wall Street. The president was Danny Porush. The accusations were true. But in 1994, the fraud was still hidden, and Stratton Oakmont sued Prodigy for defamation. Its theory was that because Prodigy moderated its bulletin boards, it was acting as a publisher of its users’ speech.

On May 24, 1995, a New York judge agreed. If Prodigy had left its boards alone and let anything through, it would have been protected as a distributor, like a bookstore. Because it had tried to moderate, it had become liable. The ruling created an impossible choice for every online service in America: moderate and be sued, or abandon moderation entirely.

Two members of Congress — Chris Cox, a California Republican, and Ron Wyden, an Oregon Democrat — thought this was insane. A month after the Prodigy decision, they introduced a provision, buried inside the larger Telecommunications Act, whose operative language ran to twenty-six words:

No provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.

Twenty-six words, written in response to a defamation suit by a boiler-room brokerage. Eight years before Facebook. Eleven before the iPhone.

On August 4, 1995, the House voted on the Cox-Wyden amendment. Not a single member spoke against it. The vote was 420 to 4. Six months later, President Clinton signed the Telecommunications Act into law. The Cox-Wyden provision — now designated Section 230 of the Communications Decency Act — took effect with it. Most of the surrounding statute was struck down within eighteen months as unconstitutional. Section 230 survived.

It would come to be called the twenty-six words that created the internet. It would also come to be called the twenty-six words that shielded social media from every parent who tried to hold it accountable for what it did to their child.

The Wall

Cox and Wyden's answer was reasonable for 1995. Let platforms moderate. Hold users responsible for what users say. Keep courts out of policing an emerging medium. For a while, it worked.

Then came the first real interpretation.

Six days after the Oklahoma City bombing in April 1995, an anonymous user posted on an AOL bulletin board advertising T-shirts that celebrated the attack. To order, the post said, call Ken in Seattle — and listed the phone number of Kenneth Zeran, a real man with no connection to the shirts. Within hours he was receiving death threats. He called AOL. AOL took the post down. Another appeared. The harassment continued for weeks.

By the time Zeran sued in 1996, Section 230 had just become law. AOL invoked it. The case reached the Fourth Circuit Court of Appeals.

American law had long distinguished between publishers — liable for everything they put out — and distributors, like bookstores, liable only after being warned. That was the framework Zeran tried to invoke.

In 1997, the court threw it out. Section 230, the judges ruled, immunized AOL not just from being treated as a publisher, but from nearly any civil suit arising from user content — even when notified, even when a reasonable response would have prevented the harm. A bookstore could be sued for knowingly selling a defamatory book. AOL could not be sued for knowingly hosting a defamatory post.

Cox and Wyden had written a shield. Zeran made it a wall.

The industry that grew up behind that wall is the one we live inside now. Facebook launched in 2004. The iPhone arrived in 2007. Instagram in 2010. TikTok, in its current form, in 2017. Every one of them was built on the assumption that whatever the platform hosted, recommended, amplified, or addicted its users to, the company itself could not be sued for it.

Parents tried. In 2006, a thirteen-year-old girl in Texas named only as Julie Doe created a MySpace profile, lied about her age to get past the site’s safety defaults, and was contacted by a nineteen-year-old man who sexually assaulted her in person a few weeks later. Her mother sued MySpace for failing to implement basic safety measures — age verification, default privacy settings for minors. The Fifth Circuit dismissed the case in 2008. Section 230 barred it. The Supreme Court declined to hear the appeal.

This became the pattern. Parents sued platforms for facilitating their children’s exploitation, addiction, harassment, and in some cases suicide. Courts dismissed the cases before discovery, often before the company had to produce a single internal document. The reasoning was nearly always the same: the harm came from content, content came from users, and Section 230 did not permit a court to treat the platform as responsible for either.

By the time the plaintiff known as Kaley filed the lawsuit KGM v Meta Platforms in 2023 — the case that would eventually crack the wall — Facebook alone had more than three billion monthly users. The combined market value of the platforms named as defendants — Meta, Google, Snap and TikTok’s parent ByteDance — exceeded three trillion dollars by the time her case reached trial. Every one of them had been built behind the wall.

The Machine

Behind the wall, they built an addiction machine.

This is not a metaphor. It is how the people who built it described what they were building. In 2017, Sean Parker — Facebook's founding president, who helped shape the company in its earliest years — sat down for a public interview with Axios.

“The thought process that went into building these applications, Facebook being the first of them,” Parker said, “was all about: How do we consume as much of your time and conscious attention as possible?”

The answer, he explained, was dopamine. Give the user a small chemical reward: a like, a comment, a notification. The brain learns to seek the next reward. Parker called it a “social-validation feedback loop,” engineered to exploit “a vulnerability in human psychology.”

Then he said the sentence that matters most. “The inventors, creators — it’s me, it’s Mark, it’s Kevin Systrom on Instagram, it’s all of these people — understood this consciously. And we did it anyway.” Asked about the long-term effects on young users, Parker said: “God only knows what it’s doing to our children’s brains.”

This was the founding president of Facebook, in a published interview, describing the platform's design intent. The mechanisms he named — infinite scroll, intermittent variable rewards borrowed from slot machines, notifications engineered to manufacture urgency, beauty filters that drive what clinicians now call "Snapchat dysmorphia," algorithms that learn which content keeps a teenage girl scrolling longest and feed her more of it — were not invented for Facebook. They were borrowed from older industries that had spent the twentieth century learning to capture human attention and hold it.

The platforms did not deploy these mechanisms one at a time. They deployed them simultaneously, on a user base that included tens of millions of children and adolescents whose prefrontal cortex — the part of the brain responsible for impulse control — does not finish developing until somewhere around age twenty-five.

These were not bugs. They were the product. And behind the wall Section 230 built, there was no one to stop them.

The Inside Voices

For years, what the public knew about Meta came from outside the company. That changed in September 2021, when Frances Haugen, a former Facebook product manager, walked out of the company with tens of thousands of pages of internal documents. She gave them to The Wall Street Journal, filed eight SEC complaints, and on October 5, 2021, testified before the Senate Commerce Committee.

The documents were Facebook's own research, on Facebook's own users. One Instagram study found that 13.5 percent of teenage girls in the U.K. said their suicidal thoughts worsened after they started using the platform. Another found 17 percent said the same about their eating disorders. A third found that 32 percent of teenage girls who already felt bad about their bodies said Instagram made them feel worse. The company’s researchers summarized it for internal leadership in a slide deck: “We make body image issues worse for one in three teen girls.”

That sentence appeared on a slide, in a meeting, inside the company, in 2019 — more than two years before the public saw it.

The documents also showed Facebook had known its platform was amplifying hate speech and misinformation around the 2020 U.S. election and the January 6 insurrection, in Ethiopia's civil war, and in the 2017 genocide of the Rohingya in Myanmar. But the documents on teenagers stood out, because they were the cleanest — measured by the company's own researchers, on a defined population, in unambiguous language. That was the thread a plaintiffs' lawyer could pull on.

Drawing a comparison that would define the next phase of the debate, Haugen told senators: “When we realized tobacco companies were hiding the harms [they] caused, the government took action.” Lawmakers from both parties agreed, on camera, that Congress would finally do something. Congress did not do something. The internal research did not produce regulation. It produced a news cycle.

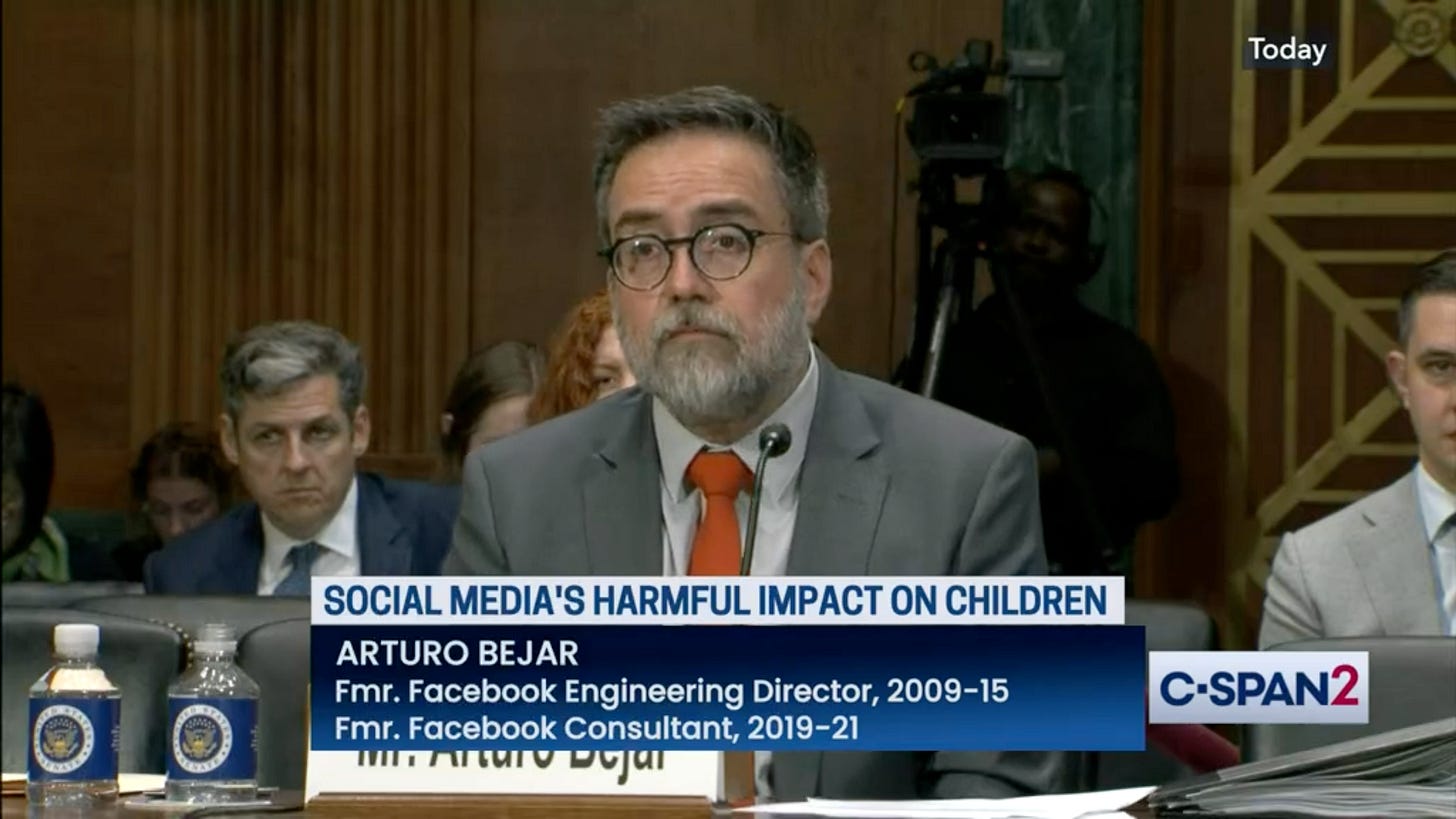

Two years later, a second Meta insider went public. Arturo Béjar was not a junior employee. He had been director of engineering for “Protect and Care,” Meta's user-safety and child-protection team, and had returned as a consultant in 2019 to work on adolescent safety.

He came back, he later testified, because of his own daughter. She was fourteen. She and her friends had begun receiving unwanted sexual advances from adult men on Instagram. She had reported the messages. Nothing had happened.

Béjar spent two years collecting data. He found that 51 percent of Instagram users reported a “bad or harmful experience” on the app within the previous week. Among thirteen-to-fifteen-year-olds, one in eight had received unwanted sexual advances on Instagram within the previous seven days. On October 5, 2021 — the same day Haugen was testifying to the Senate — Béjar emailed Zuckerberg. He proposed specific, implementable changes. Zuckerberg never replied.

“Meta’s executives knew the harm that teenagers were experiencing,” Béjar told the Associated Press the week of his own Senate testimony in November 2023. “There were things that they could do that are very doable. And they chose not to do them.”

Haugen had shown the world what Meta’s own research found. Béjar showed the world what happened when someone inside the company tried to act on it. What they laid out before the Senate, a jury in Los Angeles would affirm with its verdict in 2026.

The Crack in the Wall

For almost thirty years, every parent's lawyer faced the same question: was this a case about content, or something else?

If content — what someone had posted, shared, or recommended — Section 230 applied, and the case was dismissed. The plaintiffs who built the case that became KGM v Meta Platforms argued something different: that the platforms themselves — the code, the features, the design — were defective products, built with full knowledge of what they would do to the children who used them.

The legal theory had a clean analogy. If a carmaker knows its brakes will fail at highway speed and sells the car anyway, the manufacturer can be sued — not for what the driver did, but for how the car was built. What matters is whether the product was unreasonably dangerous for its intended use, and whether the manufacturer knew.

Put simply, it's a case about how the product was designed.

Translate that framework to Instagram, and Section 230 had nothing to say about it. Section 230 protected platforms from being treated as publishers of user content. It did not protect them from being treated as designers of a product. Infinite scroll was not speech. The dopamine-calibrated notification schedule was not speech. The algorithm’s decision to keep a teenage girl on a feed of self-harm videos was engineering. And engineering, in every other industry, could be sued.

Parents’ lawyers had been circling this insight for years. It took a judge willing to let it reach a jury.

In October 2023, Judge Carolyn B. Kuhl of the Los Angeles Superior Court — overseeing thousands of consolidated cases — issued an eighty-nine-page ruling that became the template. She rejected the platforms' Section 230 defense. She rejected their First Amendment defense. She allowed the central theory to proceed: that platforms could be held liable in negligence for their design features and for failing to warn users about the addictive properties of those features.

It was the ruling parents’ lawyers had been waiting a generation for.

Two years later, Kuhl denied the platforms’ motions for summary judgment in three bellwether cases. The first, KGM v Meta Platforms, went to trial in Los Angeles on January 27, 2026.

The verdict came eight weeks later. The internal documents that Haugen and Béjar had pulled out of Meta (“IG is a drug.” “We’re basically pushers.” “We make body image issues worse for one in three teen girls.”) were read aloud in open court, admitted into evidence, handed to a jury of twelve. On March 25, 2026, that jury found Meta and Google liable. The wall Section 230 had erected around the industry, the wall Zeran had made impregnable, did not cover what those companies had chosen to do.

Meta and Google announced within hours that they would appeal. The appeals will take years. The parallel federal trials in Oakland begin in June. Roughly sixteen hundred individual plaintiffs are already lined up behind KGM in the California proceeding alone.

But the wall had cracked. For the first time in the life of the internet, an American jury had looked at the machine that Section 230 had protected, and had said: this was not speech. It was a product, and the company that designed it knew.

The Convergence

In April 1994, seven tobacco CEOs swore under oath before Congress that nicotine was not addictive. The industry's own research had documented the truth for decades. A few months later, a paralegal at Brown & Williamson's law firm anonymously mailed four thousand pages of it to a researcher in California. By 1998, forty-six state attorneys general had signed the Master Settlement Agreement — $206 billion, the largest civil litigation settlement in American history. Before 1994, the tobacco industry had never lost a product-liability suit. After 1994, it lost and kept losing. What changed was not the harm. What changed was the evidence.

Frances Haugen, testifying in 2021, drew the comparison herself. The analogy is not perfect — tobacco took forty years, social media has had two decades since Facebook launched, and Section 230 has functioned as a kind of preemptive immunity tobacco never had. But the shape of the arc is recognizable. The KGM verdict is an early plaintiff win. The documents are being read in open court. More than forty state attorneys general, across the political spectrum, have filed their own lawsuits against Meta since 2023. The pattern that would make a Master Settlement thinkable is assembling.

And it is assembling from every direction.

Every direction, that is, except Washington. The Kids Online Safety Act passed the Senate in July 2024 by ninety-one to three. It died in the House. Reintroduced in 2025, it was gutted. No Section 230 reform has advanced. The federal government, which alone can regulate the industry at the national level, is — for the foreseeable future — out of the game.

But others are not.

In November 2024, the Australian Parliament passed the first national law in the world to ban children under sixteen from holding social media accounts. It took effect in late 2025. Platforms are required to identify and remove underage users or face fines of up to fifty million Australian dollars. Denmark, France, the U.K., and the European Commission have begun moving in similar directions. New York restricted algorithmic feeds for minors; Utah, Arkansas, California, and Virginia passed their own laws. The pressure is coming from every direction except the federal one.

The whistleblower bookshelf has kept growing. Roger McNamee’s Zucked in 2019. Haugen’s The Power of One, published in 2023. Béjar’s testimony in 2023. In March 2025, Sarah Wynn-Williams, a former Facebook executive, published Careless People, a memoir that described a “lethal carelessness” at the top of the company. It reached number one on The New York Times bestseller list. Meta won an emergency arbitration order barring her from promoting the book, and tried to block her Senate testimony too. What was once an unusual act of corporate conscience has become a genre.

The wall Section 230 built has cracked. What finishes breaking it down, if anything does, will come from the same places it has been coming from — the parents and children who brought the cases, plaintiffs' lawyers, state legislatures, foreign governments, the former employees of the companies themselves. But not the U.S. federal government. At least not soon.

Next: We explore the solutions being implemented — and those still being pursued and imagined.

Solving For is a deep-dive series that takes on one pressing problem at a time: what’s broken, what’s driving it, and what a path forward might look like.

Previous series have examined rare earth dominance, AI safety, the decline of local news, the end of amateurism in college sports, shrinking competition in Congress, and a world rearming as the global rules-based order weakens. Learn more at solvingfor.io.